Learning Robust Perception for Control

Analyzing and mitigating the impact of adversarial noise on CNN-based autonomous driving perception.

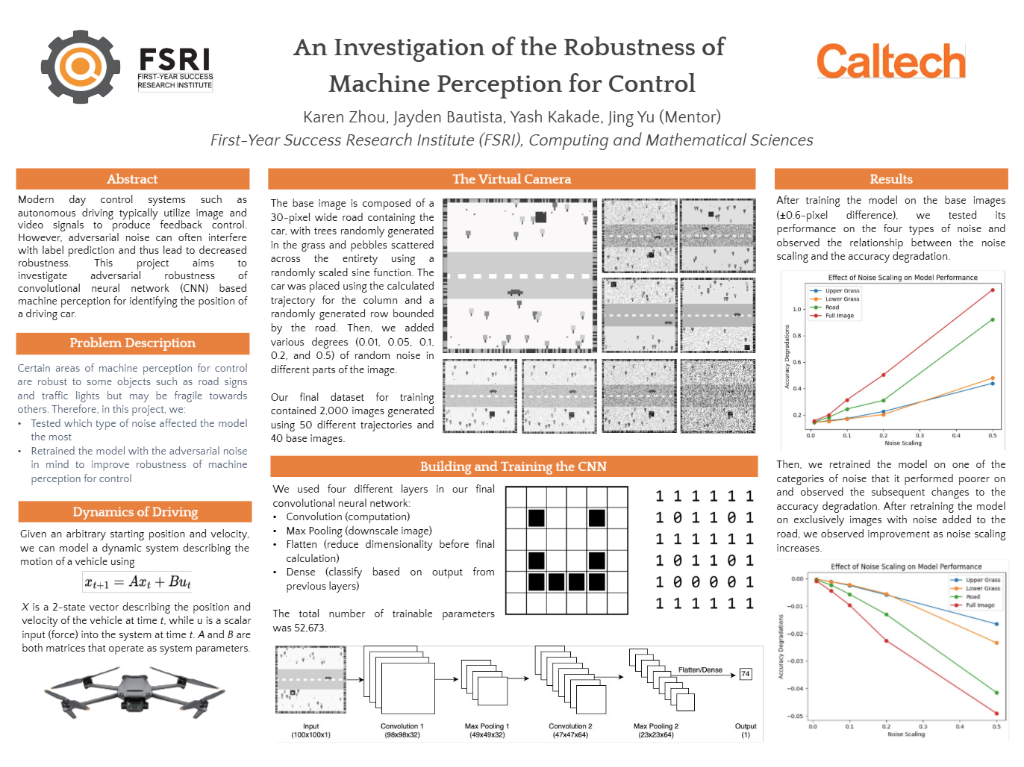

The Problem

We studied how vulnerable CNN-based perception systems are to adversarial noise in the context of autonomous driving. Using Python and TensorFlow, we built a custom CNN with 52,673 parameters and evaluated it on a dataset of 2,000 images. Introducing adversarial noise into the road areas of the images reduced accuracy by 40%. To mitigate this, we retrained the model with noise-aware strategies, which improved vehicle position identification accuracy by 20% and made the perception model more robust to this kind of noise.
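To make the idea of region-restricted noise concrete, here is a minimal NumPy sketch. It is not the project's actual attack. It assumes images are float arrays scaled to [0, 1], that a boolean road mask is available for each image, and that the perturbation is bounded uniform random noise rather than a gradient-based adversarial method. The function name `add_noise_to_region` and the `epsilon` parameter are hypothetical.

```python
import numpy as np

def add_noise_to_region(image, mask, epsilon=0.1, rng=None):
    """Add uniform noise in [-epsilon, epsilon] only where `mask` is True.

    Assumptions (not from the original project): `image` is a float array
    in [0, 1] with shape (H, W) or (H, W, C); `mask` is a boolean (H, W) array.
    """
    rng = np.random.default_rng() if rng is None else rng
    noise = rng.uniform(-epsilon, epsilon, size=image.shape)
    # Broadcast a (H, W) mask over the channel axis of a (H, W, C) image.
    region = mask[..., np.newaxis] if image.ndim == 3 else mask
    perturbed = image + noise * region
    # Keep pixel values inside the valid [0, 1] range.
    return np.clip(perturbed, 0.0, 1.0)

# Hypothetical example: a 64x64 RGB frame whose lower half is treated as road.
image = np.full((64, 64, 3), 0.5)
road_mask = np.zeros((64, 64), dtype=bool)
road_mask[32:, :] = True
noisy = add_noise_to_region(image, road_mask, epsilon=0.1,
                            rng=np.random.default_rng(0))
```

Pixels outside the mask are returned unchanged, so any accuracy difference between `image` and `noisy` can be attributed to the perturbed road region alone.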

Approach

The core of the solution is a custom pipeline built with Python, TensorFlow, Pandas, and Matplotlib. We prioritized modularity and performance so that the system can run within real-time constraints.
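One modular stage of such a pipeline could be a noise-aware augmentation step that perturbs part of each training batch before it reaches the model. The sketch below is an illustration under stated assumptions, not the project's implementation. It assumes batches shaped (N, H, W, C) with values in [0, 1] and per-image boolean road masks shaped (N, H, W), and it uses bounded uniform noise. The name `noise_aware_batch` and the parameters `noisy_fraction` and `epsilon` are hypothetical.

```python
import numpy as np

def noise_aware_batch(images, masks, noisy_fraction=0.5, epsilon=0.1, rng=None):
    """Return a copy of `images` in which a random subset has noisy road pixels.

    Assumptions (not from the original project): `images` has shape
    (N, H, W, C) with values in [0, 1]; `masks` is boolean with shape (N, H, W).
    """
    rng = np.random.default_rng() if rng is None else rng
    batch = images.astype(np.float32, copy=True)
    n = len(batch)
    n_noisy = int(round(noisy_fraction * n))
    # Pick which samples in the batch get perturbed; the rest stay clean.
    chosen = rng.choice(n, size=n_noisy, replace=False)
    noise = rng.uniform(-epsilon, epsilon, size=batch[chosen].shape)
    noise = noise.astype(np.float32)
    # Apply noise only inside each chosen sample's road mask.
    region = masks[chosen][..., np.newaxis]
    batch[chosen] = np.clip(batch[chosen] + noise * region, 0.0, 1.0)
    return batch, chosen

# Hypothetical example: 8 grayscale 16x16 frames, lower half marked as road.
images = np.full((8, 16, 16, 1), 0.5, dtype=np.float32)
masks = np.zeros((8, 16, 16), dtype=bool)
masks[:, 8:, :] = True
batch, chosen = noise_aware_batch(images, masks, noisy_fraction=0.25,
                                  rng=np.random.default_rng(0))
```

Mixing clean and perturbed samples in the same batch is one common way to train for robustness without giving up accuracy on clean inputs. Because the function only touches NumPy arrays, it can sit in front of a TensorFlow training loop without changing the model code.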

Results